When AI Becomes a Scaffold Instead of a Shortcut

Designing for Thinking in the Age of Cognitive Offloading

One of the most important questions in AI and education is not whether students will use generative AI.

They will.

The more urgent question is:

Will AI help students think more deeply, or will it quietly replace the thinking we hoped they would do?

This question becomes especially important when we are designing learning for students with learning disabilities, ADHD, dyslexia, language processing challenges, executive function needs, or other learning differences. For these students, generative AI can be an extraordinary access tool. It can reduce cognitive load, help students begin writing, make dense texts more approachable, organize ideas, offer feedback, and support participation in work that might otherwise feel out of reach.

But the same tool that opens access can also create a new risk.

AI can become a scaffold.

Or it can become a shortcut.

And the difference is not really in the tool.

It is in the design.

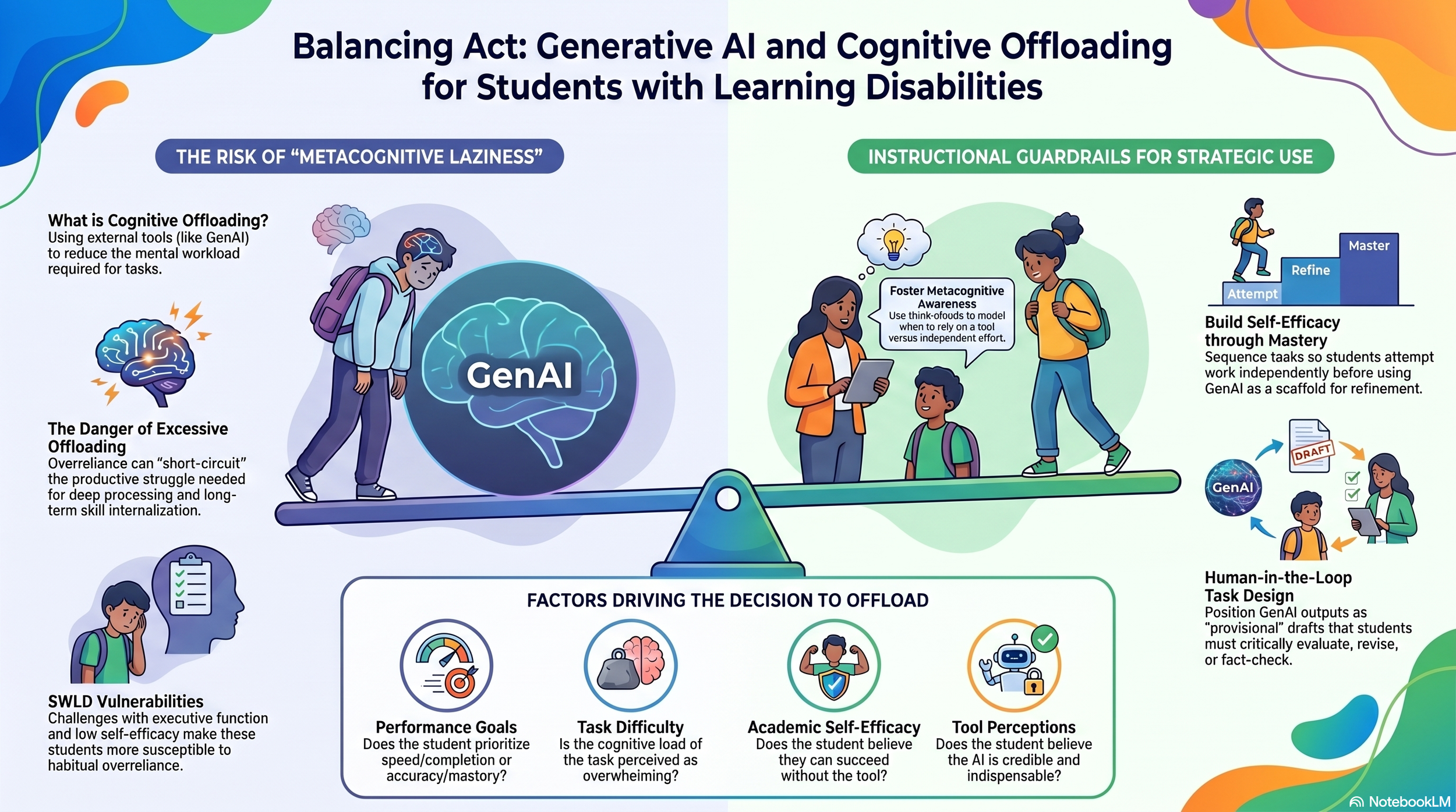

Infographic: Generative AI can either reduce barriers for students with learning disabilities or bypass the productive struggle needed for long-term learning. The instructional design determines which path students are more likely to take.

Seung and Basham’s 2026 article “Cognitive Offloading in the Age of Generative AI: What Does It Mean for Students With Learning Disabilities?” frames this tension through the concept of cognitive offloading. Cognitive offloading means using an external tool to reduce the mental effort required to complete a task. In schools, students have always offloaded thinking in some way: calculators, graphic organizers, spellcheck, reminders, notes, audiobooks, and text-to-speech all help learners manage cognitive demands. The concern is not offloading itself. Thoughtfully designed offloading can free up cognitive space for deeper, more meaningful thinking.

The challenge is that generative AI changes the scale of what can be offloaded. It does not just help with spelling, decoding, or remembering. It can generate ideas, summarize texts, draft paragraphs, organize arguments, revise writing, and even imitate reflection. That means students may offload not only lower-level barriers, but also the very cognitive processes that build learning over time.

For students with learning disabilities, this creates a real design dilemma.

AI can reduce barriers.

AI can also bypass productive struggle.

Both things can be true.

Access Is Not the Same as Thinking

In inclusive classrooms, we often talk about access as a good thing, and it is. Students should not be blocked from sophisticated ideas because decoding is hard, working memory is overloaded, or writing fluency gets in the way of expressing complex thought.

For a dyslexic student, AI might make a difficult text more readable.

For a student with ADHD, AI might help organize scattered ideas.

For a student with dysgraphia, AI might reduce the transcription burden enough that their actual thinking can finally become visible.

These are not small supports. They can change what participation feels like.

Seung and Basham argue that generative AI can serve as compensatory cognitive offloading when it helps students reduce unnecessary cognitive load and engage in higher-order thinking. For example, AI can support reading through text leveling, summarization, vocabulary support, or graphic organizers. It can support writing through brainstorming, planning, speech-to-text, drafting support, and revision feedback. When used well, these tools can help students access the task without removing their ownership of the thinking.

But access alone is not enough.

A student can access a text summary without learning how to identify the main idea.

A student can submit an organized paragraph without learning how to organize ideas.

A student can accept AI feedback without learning how to evaluate, revise, or make decisions as a writer.

This is where the risk of excessive offloading becomes important. If AI repeatedly takes over the processes of comprehension, planning, monitoring, and revision, students may produce better-looking work while losing opportunities to build the skills underneath it.

That is the danger of mistaking performance for learning.

Why Some Students May Be More Vulnerable to Over-Offloading

One of the strongest ideas in the article is that students do not make AI-use decisions in a vacuum. They make them through a kind of internal cost-benefit analysis.

How hard does this task feel?

How much effort will it take?

Do I think I can do it?

Do I trust the tool more than I trust myself?

Will the teacher value the process, or only the finished product?

Seung and Basham describe cognitive offloading as a value-based decision-making process, shaped by performance goals, task difficulty, academic self-efficacy, and perceptions of the tool.

This matters deeply for students with learning disabilities.

If a student has a long history of school feeling hard, embarrassing, slow, or exhausting, they may not experience AI as an optional support. They may experience it as survival.

That student may turn to AI too quickly, not because they are lazy, but because they have learned to doubt their ability to begin without help.

That is why I keep coming back to this question:

Is AI helping the student do the thinking, or is it protecting the student from having to try?

Those are not the same thing.

And for neurodivergent learners, the answer will not always be obvious.

The Productive Struggle Zone

The goal is not to make learning harder for the sake of hardness.

The goal is also not to remove every struggle.

The goal is to design learning so students remain in what I think of as the productive struggle zone: the space where challenge is real, but not overwhelming; where support is available, but not replacing the learner; where students still have to make meaning, evaluate ideas, and build confidence through their own effort.

My NotebookLM slide deck captures this tension well: the outcome is not determined by the software. It is determined by the design of the cognitive offloading.

That line feels central.

Because in schools, we sometimes ask the wrong question.

We ask:

Should students use AI for this?

A better question might be:

What thinking must remain human in this task?

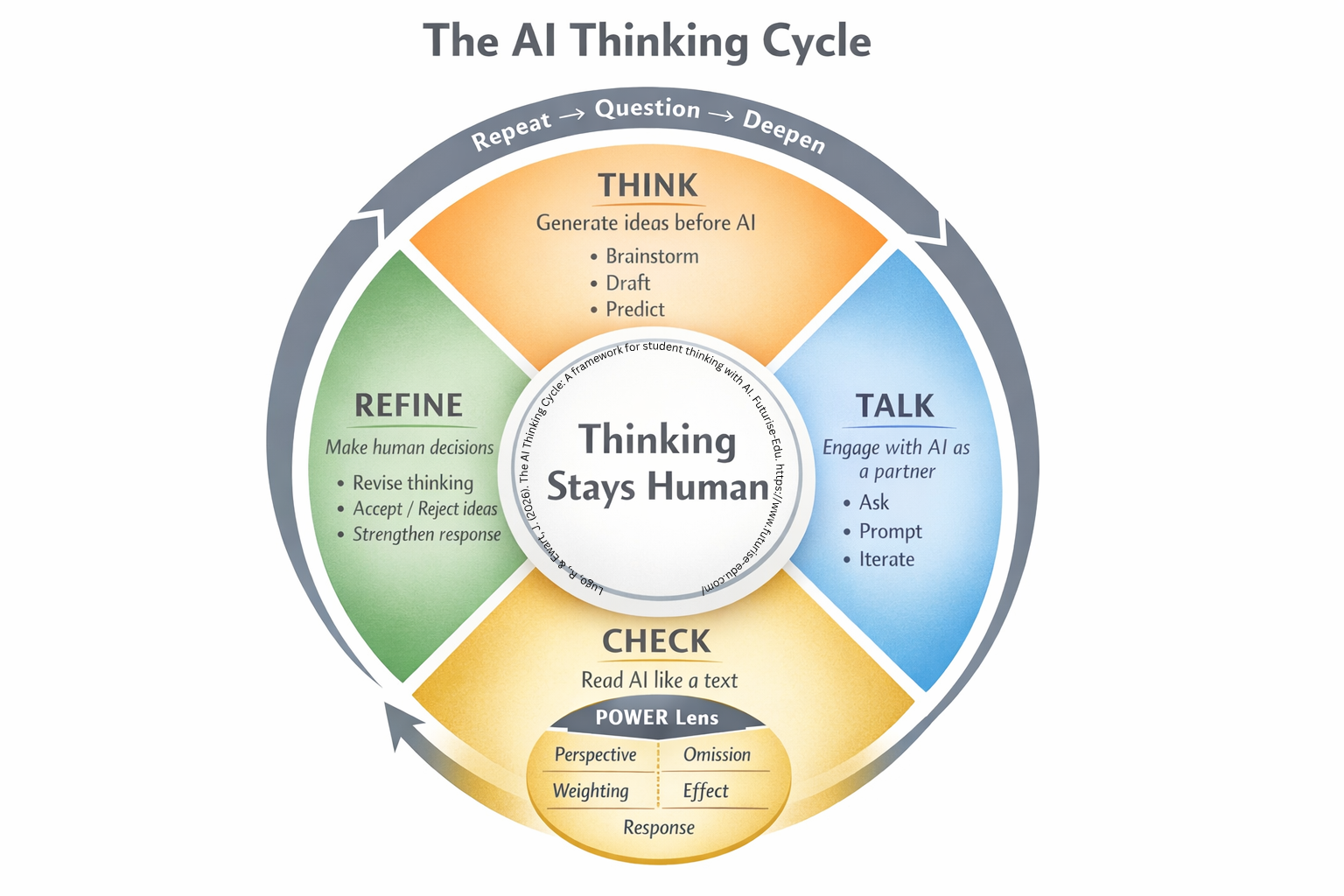

That is where my AI Thinking Cycle comes in.

The AI Thinking Cycle: Keeping Thinking Human

The AI Thinking Cycle is built around one central principle:

Thinking stays human.

It is a student-facing framework for using AI without removing the learner from the center of the task. The cycle asks students to move through four phases:

1. Think

Students generate ideas before AI enters the process.

They might brainstorm, draft, predict, notice, or name what they already understand. This matters because students need evidence that their minds can begin the work.

2. Talk

Students engage with AI as a partner, not an authority.

They ask, prompt, clarify, and iterate. The AI becomes part of the conversation, but it does not become the source of truth.

3. Check

Students read AI output like a text.

This is where the POWER Lens becomes essential. Students examine:

Perspective: Whose viewpoint is centered?

Omission: What is missing?

Weighting: What is emphasized or minimized?

Effect: How does this output shape understanding?

Response: What should I do with this information?

This phase matters because AI literacy is not just knowing how to prompt. It is knowing how to question.

4. Refine

Students make human decisions.

They revise their thinking, accept or reject suggestions, strengthen their response, and decide what belongs in the final product.

Then the cycle repeats.

Question.

Deepen.

Revise.

This structure keeps AI from becoming a one-step answer machine. It turns AI use into a visible thinking process.

Guardrails Are Not Restrictions. They Are Design Moves.

When people hear “guardrails,” they often think of rules, bans, or compliance.

But instructional guardrails are different.

They are design choices that protect learning.

Seung and Basham identify several important guardrails for supporting strategic AI use with students with learning disabilities: fostering metacognitive awareness, teaching AI literacy, building academic self-efficacy, and designing tasks intentionally so students remain active thinkers.

In practice, that might look like:

Before AI:

“What is the goal of this task?”

“What part do I need to try myself first?”

“What do I already know?”

During AI:

“Is AI supporting my thinking or replacing it?”

“What did I ask it to do?”

“Do I understand the response?”

After AI:

“What did I change?”

“What did I reject?”

“What could I do with less support next time?”

This is where metacognition becomes more than a buzzword. Students need language for noticing their own dependency, confidence, confusion, and decision-making.

They need to be invited into the conversation.

Not just told, “Use AI responsibly.”

Students need to understand what responsible use feels like in the middle of a task.

The Most Important Guardrail: Student Agency

For me, the most important part of this conversation is student agency.

Students should not simply be handed AI rules created by adults who are afraid of the tool.

They should be part of asking:

When does this help me learn?

When does this make me feel capable?

When does this make me dependent?

When does this help me access ideas I could not reach before?

When does this make me skip the part where I actually grow?

This is especially important for students with learning differences. Too often, adults make decisions about support without helping students understand the purpose of the support. But if we want students to become independent learners, they need to learn how to make decisions about scaffolds themselves.

A scaffold should not just help students complete this assignment.

A scaffold should help students understand themselves as learners.

A Futurise-Edu Design Rule

Here is the design rule I keep coming back to:

AI should reduce barriers, not remove the learner.

That means we can use AI to support access, but we must still design for:

student initiation

visible thinking

evaluation of output

reflection on tool use

revision and decision-making

gradual release of support

The question is not whether AI belongs in classrooms.

The question is whether our learning designs are strong enough to keep students cognitively present while they use it.

Because the future of learning will not be protected by pretending AI does not exist.

It will be protected by designing tasks where thinking cannot be outsourced.

Closing Reflection

Generative AI has made an old educational tension more visible.

Students have always needed support.

Students have always needed challenge.

Students have always needed adults who could tell the difference between a scaffold and an escape hatch.

AI raises the stakes because it can look like learning even when learning has been bypassed.

So as educators, we need to design with more precision.

Not less AI.

Not more AI.

Better learning design.

Learning design that asks students to think first, talk with the tool, check its output, refine their ideas, and remain the authors of their own understanding.

Because access matters.

But access to information is not the same as access to thinking.

And in the age of AI, who gets to think is now a design decision.